SIGGRAPH Asia 2012 Talk: Lip-Synced...


At SIGGRAPH Asia 2012, I gave a talk on Lip-Synced Character Speech Animation with Dominated Animeme Models, a project carried out in collaboration with National Taiwan University over about three years. The work is on lip-sync animation, one part of facial animation. The idea is to synthesize lip-synced character speech animation in real time from a speech sequence (audio) and its corresponding analyzed text (in practice, its phonemes). The talk went well even though I hadn’t prepared a polished script.

Here is a photo of the presenters in the same session, Facial Animation, organized by Prof. Ming Ouhyoung. Unfortunately, we missed Prof. Paul Debevec, who was supposed to be in another session. It was an honor to give a talk in the same session as these people, and it was interesting to see what NextMedia Animation has done. You never know what’s gonna happen at SIGGRAPH.

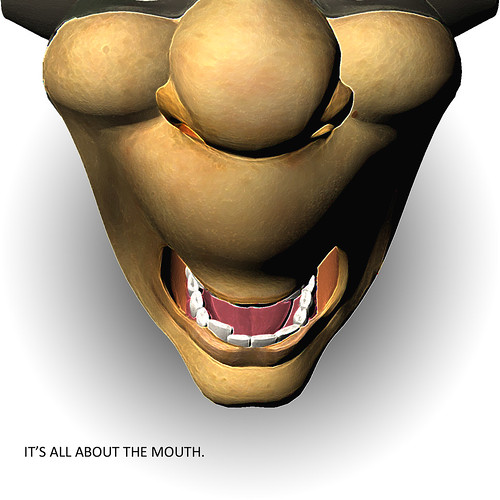

Yes, it’s all about mouth!